AI Visibility for Multifamily: Why Most AEO Tools Miss the Mark | The Unlock

Why most AEO tools don't work for multifamily

Getting in front of the hundreds of millions of monthly active users on ChatGPT, Claude and Gemini is a big deal. These platforms are an increasingly important source of organic leads, and they can even help you get more out of your existing internet listing service (ILS) spend. None of this is a secret anymore.

Your SEO vendor knows this. And there’s a budding crop of “AEO tools” (answer engine optimization) that claim to offer the same view into whether your communities are appearing on these AI platforms.

We recently analyzed nearly 2,000 multifamily competitors across 326,000 data points to better understand what makes AI models recommend one apartment over another. We published the findings in the AI Discovery Black Book, and one of the clearest takeaways is that most AEO/GEO (generative engine optimization) tools on the market aren’t measuring what actually matters.

Pitfalls we've found with many AEO/GEO tools

Most AI visibility tools are built on keyword search, which is the logic that powers traditional SEO. Does this term match what's on your website? If so, where does it rank? The problem is that the framework doesn’t translate to how AI models actually work. Here’s where they lose the plot:

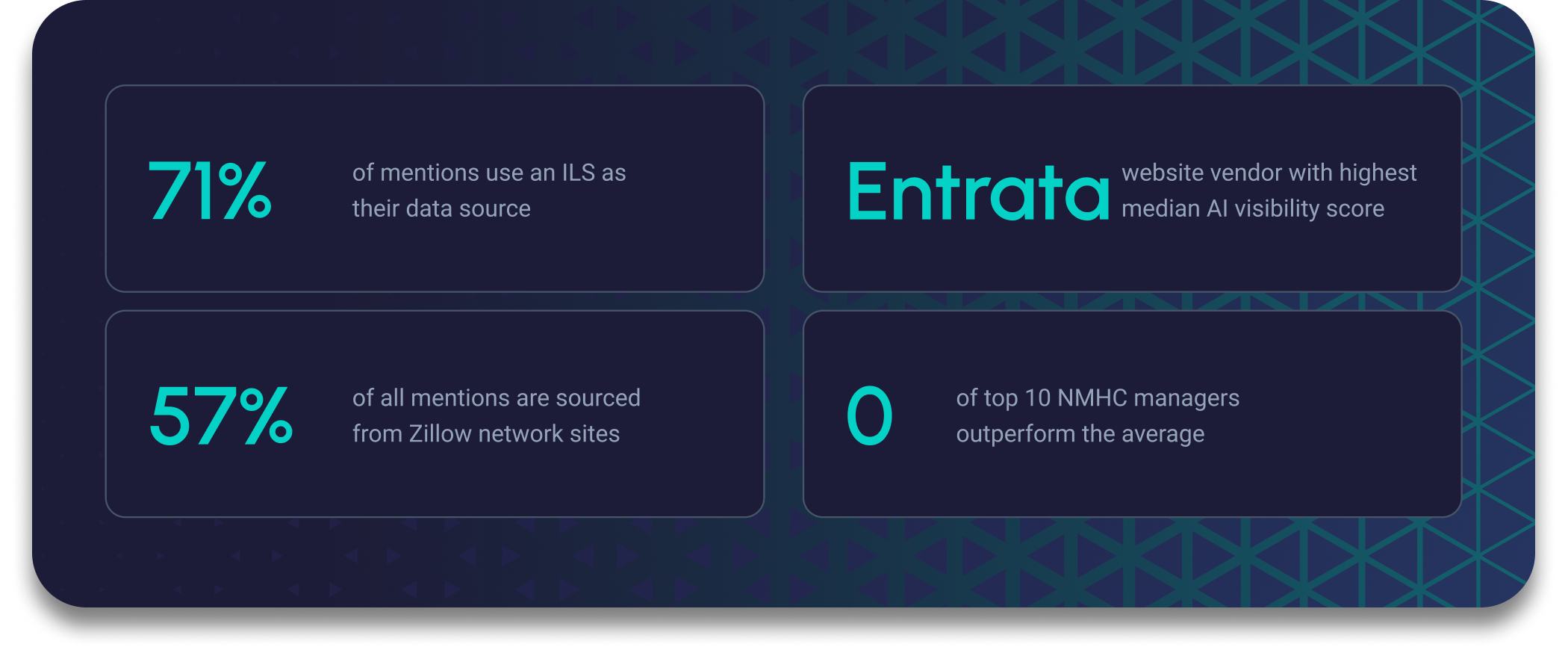

1. They are overly focused on your domain. Our research shows that more than 70% of AI mentions don’t come from your property website. Large language models (LLMs) pull from multiple sources, with the largest weight coming from ILSs like Zillow and Apartments.com. An AEO tool that only monitors your domain misses most of the picture and can’t tell you which listing sources you’re doing well on and which need work.

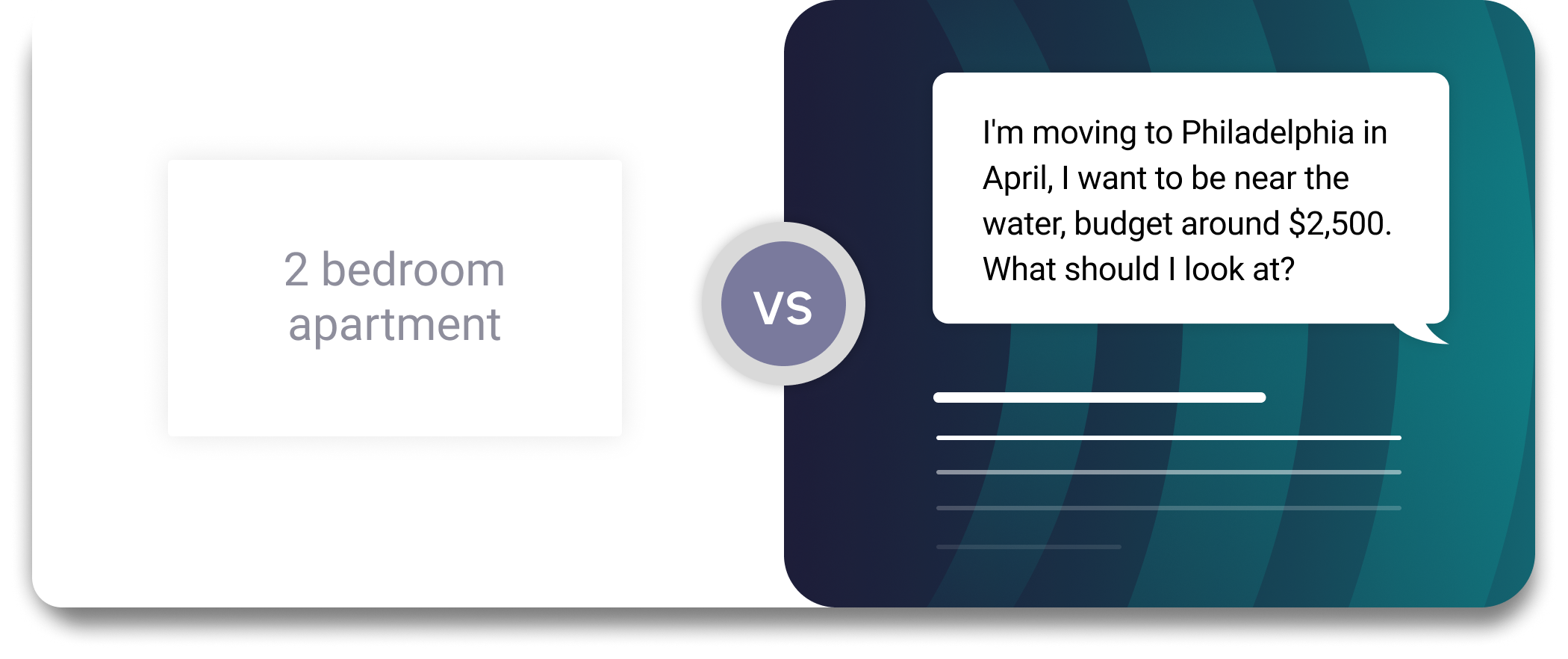

2. They track keywords, but LLMs are not search engines; they’re answer engines. When a renter asks for a dog-friendly one-bedroom near the Red Line with in-unit laundry, the model isn’t matching keywords. It’s interpreting intent and surfacing the listings with enough detail to actually meet those needs. A keyword tracker has no way to measure whether your communities are winning in that context.

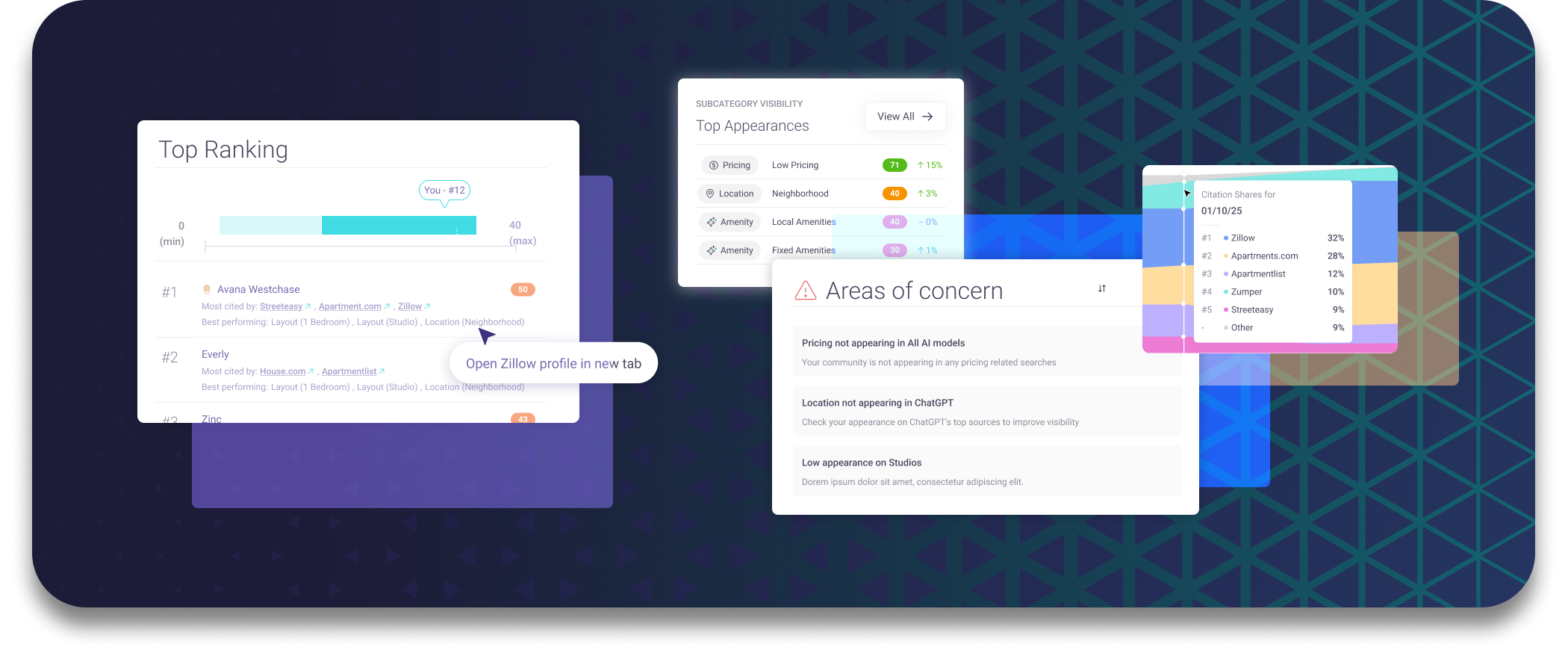

3. They test a handful of keywords, but the model checks dozens of subtopics. AI models take a single prospect prompt and fan it out behind the scenes into multiple sub-queries, checking for rent specials, parking details, pet policies and unit-specific features, then assemble a response from whatever they can find. If your listings don’t have the depth to answer those sub-queries, your community won’t show up as the answer.
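To make the fan-out idea concrete, here is a toy Python sketch. Everything in it is hypothetical: the sub-query names, the listing fields and the per-source data are made up for illustration, and real AI models generate and weigh their sub-queries internally in ways an outside tool can’t observe directly. It only shows the shape of the problem: check which sub-queries each listing source can answer, then see what stays unanswered across all of them.

```python
from typing import Dict, List

# Hypothetical sub-queries a model might derive from one renter prompt.
# Real models choose their own sub-queries; these are illustrative only.
SUB_QUERIES: List[str] = ["rent_specials", "parking", "pet_policy", "in_unit_laundry"]

# Hypothetical listing data for one community, keyed by source.
# A field counts as answered only if it holds a non-empty value.
LISTINGS: Dict[str, Dict[str, str]] = {
    "property_website": {"pet_policy": "Dogs welcome, 2 max", "in_unit_laundry": "Yes"},
    "zillow": {"rent_specials": "", "parking": "Garage, $75/mo"},
    "apartments_com": {"pet_policy": "Cats and dogs", "parking": "Surface lot"},
}


def answered(listing: Dict[str, str], sub_queries: List[str]) -> List[str]:
    """Return the sub-queries this listing has a non-empty answer for."""
    return [q for q in sub_queries if listing.get(q, "").strip()]


def coverage_report(listings: Dict[str, Dict[str, str]], sub_queries: List[str]) -> Dict[str, float]:
    """Share of sub-queries each source answers, plus all sources combined."""
    report: Dict[str, float] = {}
    combined = set()
    for source, fields in listings.items():
        hits = answered(fields, sub_queries)
        combined.update(hits)
        report[source] = len(hits) / len(sub_queries)
    report["all_sources"] = len(combined) / len(sub_queries)
    return report


def gaps(listings: Dict[str, Dict[str, str]], sub_queries: List[str]) -> List[str]:
    """Sub-queries that no source answers at all."""
    covered = {q for fields in listings.values() for q in answered(fields, sub_queries)}
    return [q for q in sub_queries if q not in covered]


if __name__ == "__main__":
    for source, share in coverage_report(LISTINGS, SUB_QUERIES).items():
        print(f"{source:>16}: {share:.0%} of sub-queries answered")
    print("Unanswered everywhere:", gaps(LISTINGS, SUB_QUERIES))
```

In this made-up data no single source answers more than half of the sub-queries, and rent specials are missing everywhere. That is the kind of gap a tool watching only one domain, or only a few keywords, would never surface.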

Community-level visibility is what actually matters

The question that actually fills apartments isn’t “Does ChatGPT know who we are?” It’s “When a renter describes what they need, do our individual communities show up?” That means measuring visibility at the community and unit level across your property website, your Zillow listing, your Apartments.com profile and your RentCafe page, not just whether the AI model recognizes your portfolio name.

Across 416 assessments in the Black Book, clear patterns separate winners from losers, and the early-mover advantage is strong. Our analysis shows that 75% of communities never show up in conversations with AI engines. And the top 5% who do show up are getting over half of all mentions.
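Both concentration figures can be computed from a list of mention counts, one per community. A minimal Python sketch, assuming you already have those counts; how mentions are collected is outside its scope, and the sample counts in it are invented for illustration, not Black Book data:

```python
from math import ceil
from typing import List


def share_never_mentioned(mentions: List[int]) -> float:
    """Fraction of communities with zero AI mentions."""
    return sum(1 for m in mentions if m == 0) / len(mentions)


def top_share(mentions: List[int], fraction: float) -> float:
    """Share of all mentions captured by the top `fraction` of communities.

    The size of the top group is rounded up so at least one community counts.
    """
    ranked = sorted(mentions, reverse=True)
    k = max(1, ceil(len(ranked) * fraction))
    total = sum(ranked)
    return sum(ranked[:k]) / total if total else 0.0


if __name__ == "__main__":
    # Hypothetical mention counts for 20 communities (made up, not real data).
    mentions = [40, 12, 8, 5, 3] + [0] * 15
    print(f"Never mentioned: {share_never_mentioned(mentions):.0%}")
    print(f"Top 5% share of mentions: {top_share(mentions, 0.05):.0%}")
```

Rounding the top group up rather than down keeps a small portfolio from producing an empty "top 5%".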

Some of the highest-performing property management companies (PMCs) manage modest portfolios, while some of the largest operators barely register. In fact, none of the top PMCs ranked by the National Multifamily Housing Council (NMHC) outperform the average community. That’s worth sitting with: the biggest portfolios in the country are underperforming the average. Portfolio size doesn’t predict visibility. Community-level execution does.

The Black Book covers all of it: data sources, LLM differences, website vendors, regional breakdowns, and which operators are winning. Read it here.

If you’re evaluating AI visibility tools, start by asking one question: can I measure at the community level? Peek Discover was the first tool launched specifically to monitor AI visibility for multifamily properties. Grab a free score if you want to see what it looks like for your portfolio.